Will AI Govern the World? Power, Trust, and the Future of Human Freedom

Introduction: The Age of Distrust

We live in an era of extraordinary capability and extraordinary suspicion.

Artificial intelligence can model climate futures.

Blockchain can track financial flows.

Data networks connect billions of minds.

And yet…

Trust feels fragile.

Scandals involving powerful individuals, opaque institutions, and elite networks have amplified a growing sentiment:

Are our systems still fair?

Or are they protecting themselves?

When accountability appears uneven, the ground beneath democracy begins to tremble.

This is not paranoia.

It is a crisis of confidence.

And it leads to a provocative question:

If human power structures are flawed, should artificial intelligence eventually manage Earth’s resources instead?

The Temptation of Algorithmic Governance

Imagine a superintelligent system tasked with:

Optimizing food distribution

Balancing global energy grids

Detecting corruption patterns

Modeling climate mitigation in real time

Allocating resources for maximum collective welfare

No ego.

No greed.

No backroom deals.

A planetary operating system.

The appeal is obvious: remove human self-interest from decision-making.

But here lies the first paradox.

AI does not eliminate values.

It encodes them.

Who decides what “maximum welfare” means?

Economic growth?

Equality?

Liberty?

Environmental preservation?

Long-term survival over short-term comfort?

These are philosophical trade-offs, not engineering problems.
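To make the paradox concrete, here is a minimal sketch, assuming a toy world with three hypothetical policies and invented scores. The policy names, the numbers, and the `best_policy` helper are all illustrative assumptions, not a real governance model. The only point is that the "optimal" answer changes as soon as the weights change.

```python
# Hypothetical policies scored on three values (illustrative numbers only).
POLICIES = {
    "expand_industry": {"growth": 0.9, "equality": 0.3, "environment": 0.2},
    "redistribute": {"growth": 0.4, "equality": 0.9, "environment": 0.5},
    "conserve": {"growth": 0.2, "equality": 0.5, "environment": 0.9},
}


def best_policy(weights):
    """Return the policy name with the highest weighted score."""
    def score(name):
        return sum(weights[value] * POLICIES[name][value] for value in weights)
    return max(POLICIES, key=score)


# Three different answers to "what does maximum welfare mean?"
growth_first = {"growth": 0.7, "equality": 0.2, "environment": 0.1}
equality_first = {"growth": 0.2, "equality": 0.6, "environment": 0.2}
environment_first = {"growth": 0.1, "equality": 0.2, "environment": 0.7}

print(best_policy(growth_first))       # expand_industry
print(best_policy(equality_first))     # redistribute
print(best_policy(environment_first))  # conserve
```

The optimizer is identical in all three runs. What differs is the set of weights, and someone has to choose them.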

Replacing elites with algorithms does not remove power.

It relocates it.

We Are Not Angels (But We’re Not Cavemen Either)

There is a common frustration:

“We are still cavemen running planetary systems.”

Biologically, this isn’t wrong.

Our brains evolved for tribal survival, not global governance.

But history complicates the narrative.

Compared to two centuries ago:

Extreme poverty has dramatically declined.

Literacy is widespread.

Slavery is outlawed globally.

Women vote across most of the world.

International humanitarian law exists.

Humanity is morally inconsistent, not morally stagnant.

Civilization progresses not by eliminating bad actors,

but by constraining them.

The rule of law exists because humans are flawed.

The real issue today is not that evil exists.

It is whether accountability applies equally.

When it doesn’t, trust erodes.

Collapse or Recalibration?

Fear of societal collapse tends to surface when trust runs low.

History shows that collapses are rarely cinematic explosions.

They are gradual erosions:

Institutional gridlock

Polarization

Declining legitimacy

Information fragmentation

But trust troughs have historically preceded reform.

The Great Depression reshaped economic regulation.

Watergate reshaped political oversight.

Financial crises reshaped banking governance.

Pressure can destabilize, or it can catalyze structural redesign.

The outcome is not inevitable. It depends on participation.

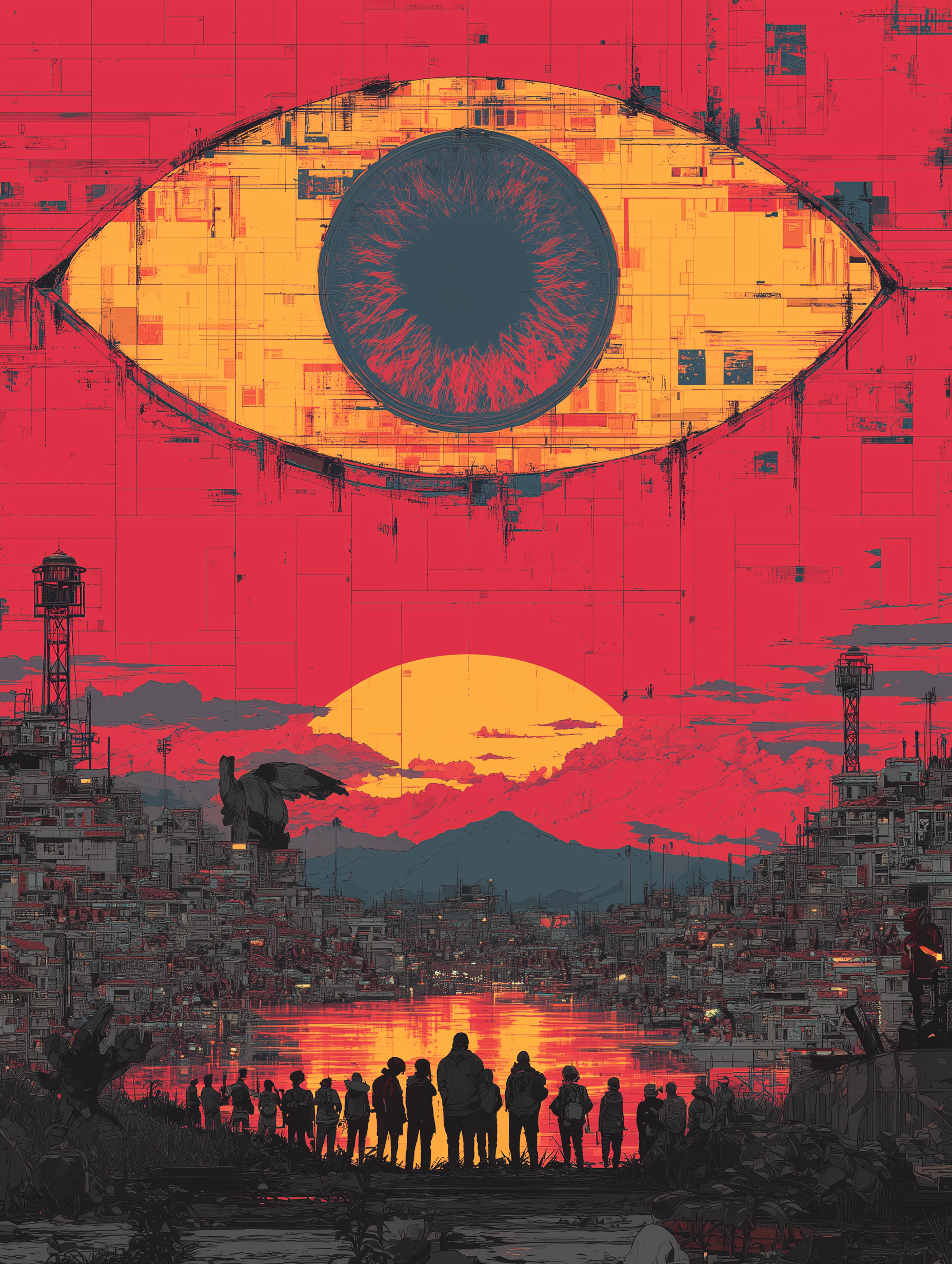

The Hybrid Model: AI as Illumination, Not Ruler

If freedom is non-negotiable, fully automated governance is dangerous.

But AI can serve a different role.

Not sovereign.

Not a dictator.

But an illumination engine.

Imagine:

Public dashboards tracking inequality metrics in real time.

AI detecting statistical bias in judicial sentencing.

Environmental impact transparency per corporation.

Lobbying networks visualized publicly.

Open-source algorithm audits required by law.
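As one illustration of the bias-detection idea, the sketch below runs a crude disparity check: it compares average sentence length between groups for the same offense and flags large gaps. The records, the group labels, the 25% threshold, and the `sentencing_gaps` function are all made up for this example. A real audit would need far more data and proper statistical controls.

```python
from collections import defaultdict
from statistics import mean

# Made-up records: (offense, group, sentence in months).
RECORDS = [
    ("theft", "A", 10), ("theft", "A", 12),
    ("theft", "B", 16), ("theft", "B", 18),
    ("fraud", "A", 24), ("fraud", "B", 25),
]


def sentencing_gaps(records, threshold=0.25):
    """Flag offenses where group averages differ by more than `threshold`.

    The gap is measured relative to the lowest group average.
    Assumes every sentence is a positive number of months.
    """
    by_offense = defaultdict(lambda: defaultdict(list))
    for offense, group, months in records:
        by_offense[offense][group].append(months)

    flagged = {}
    for offense, groups in by_offense.items():
        averages = {group: mean(values) for group, values in groups.items()}
        low, high = min(averages.values()), max(averages.values())
        gap = (high - low) / low
        if gap > threshold:
            flagged[offense] = round(gap, 2)
    return flagged


print(sentencing_gaps(RECORDS))  # {'theft': 0.55}
```

A flag like this is a prompt for human review, not a verdict. It shows where to look, and people still decide what the gap means.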

AI as an exposure system.

AI as a bias detector.

AI as a corruption alarm.

Humans retain final decision-making authority.

Transparency becomes infrastructure.

Power finds it harder to hide.

The Real Threat: Cynicism

Societies do not collapse merely because bad actors exist.

They collapse when enough people withdraw.

Cynicism accelerates entropy.

When citizens believe:

“Everything is rigged.”

“Participation doesn’t matter.”

“Power is permanently captured.”

…they disengage.

And disengagement weakens institutions more than corruption does.

The antidote is not utopian faith.

It is structural friction against predation.

Bad behavior must become expensive.

That requires:

Distributed oversight

Open data systems

Civic education

Technological transparency

Cross-border interdependence

None of these requires moral perfection.

They require design.

The Freedom Equation

There is an unavoidable tension.

Total optimization often demands reduced autonomy.

But freedom includes risk.

A society with zero corruption and zero autonomy is not free.

A society with absolute freedom and zero constraint collapses.

The balance lies in:

Constraining abuse

Preserving pluralism

Embedding transparency

Preventing power concentration

AI can strengthen the architecture.

But it cannot replace moral responsibility.

Conclusion: We Are Not Pre-Moral. We Are Transitional.

The discomfort we feel when justice appears uneven is evidence of evolution.

Cavemen admire dominance.

Civilized minds question it.

The future is unlikely to be:

AI tyranny

Elite immortality

Total collapse

It is more likely to be a turbulent recalibration.

The real question is not:

“Will AGI save us?”

But rather:

“Can we design systems that make exploitation harder, freedom durable, and transparency unavoidable?”

AI may assist.

But the architecture of trust remains a human responsibility.

We are not angels.

We are not savages.